Docker

A geeky post. You have been warned.

I wanted to make a brief reference to my favourite new technology: Docker. It's brief because this is far too big a topic to cover in a single post, but if you're involved in any kind of Linux development activity, then trust me, you want to know about this.

What is Docker?

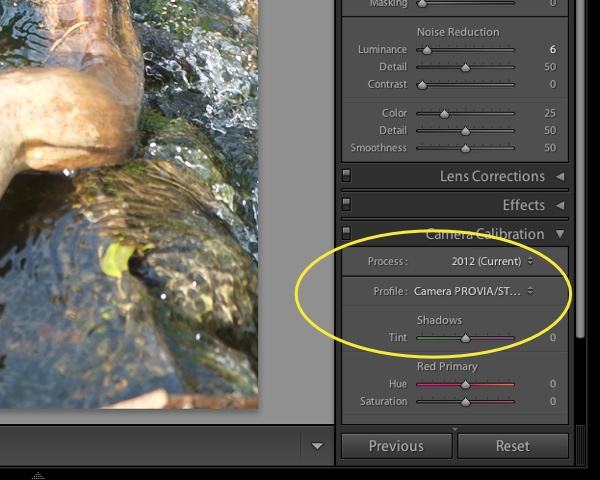

Docker is a new and friendly packaging of a collection of existing technologies in the Linux kernel. As a crude first approximation, a Docker 'container' is like a very lightweight virtual machine: something between virtualenv and VirtualBox. Or, as somebody very aptly put it, "chroot on steroids". It makes use of LXC (Linux Containers), cgroups, kernel namespaces and AUFS to give you most of the benefits of running several separate machines, even though the containers are all in fact sharing the kernel, and some of the operating system facilities, of the host. The separation is good enough that you can, for example, run an Ubuntu 12.04, an Ubuntu 14.04 and a SUSE environment, all on a CentOS server.

"Fine", you may well say, "but I can do all this with VirtualBox, or VMware, or Xen - why would I need Docker?"

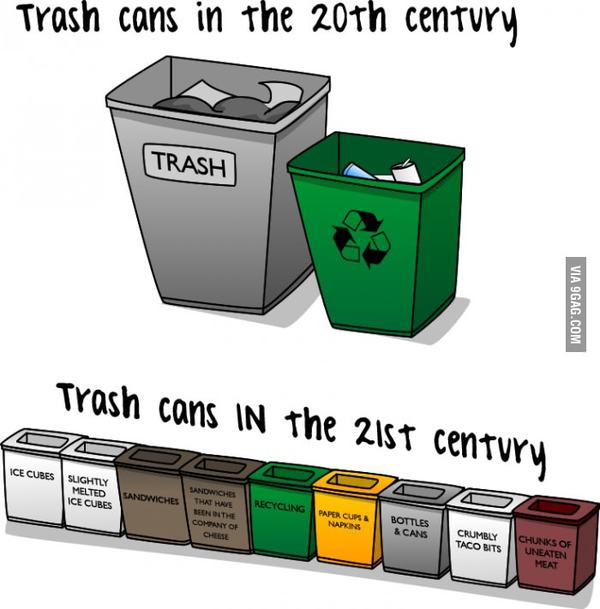

Well, the difference is that Docker containers typically start up in milliseconds instead of seconds, and more importantly, they are so lightweight that you can run hundreds of them on a typical machine, where using full VMs you would probably grind to a halt after about half a dozen. (This is mostly because the separate worlds are, in fact, groups of processes within the same kernel: you don't need to set aside a gigabyte of memory for each container, for example.)
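If you want to see the "groups of processes within the same kernel" idea for yourself, here is a minimal Python sketch. It isn't anything from Docker itself; it just assumes a Linux machine where `/proc/<pid>/ns` is readable, and the helper names `namespace_ids` and `kernel_release` are my own inventions for illustration. On a Mac or Windows box it simply returns nothing.

```python
import os
import platform


def namespace_ids(pid="self"):
    """Return {namespace_name: identifier} for a process.

    Reads the symlinks under /proc/<pid>/ns (Linux only). Returns an
    empty dict when that directory is missing or unreadable, e.g. on a
    non-Linux host.
    """
    ns_dir = f"/proc/{pid}/ns"
    result = {}
    try:
        names = sorted(os.listdir(ns_dir))
    except OSError:
        return result
    for name in names:
        try:
            # Each entry is a symlink whose target names the namespace.
            result[name] = os.readlink(os.path.join(ns_dir, name))
        except OSError:
            continue
    return result


def kernel_release():
    """Kernel release string as reported by the running system."""
    return platform.release()


if __name__ == "__main__":
    print("kernel:", kernel_release())
    for name, ident in namespace_ids().items():
        print(f"{name:10s} {ident}")
```

Run it once on the host and once inside a container: you should find the kernel release is the same in both places, while most of the namespace identifiers differ. That is roughly what "same kernel, separate worlds" means in practice.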

Docker has been around for about a year and a half, but it's getting a lot of attention at present partly because it has just hit version 1.0 and been declared ready for production use, and partly because, at the first DockerCon conference, held just a couple of weeks ago, several large players like Rackspace and Spotify revealed how they were already using and supporting the technology.

Add to this the boot2docker project, which makes this all rather nice and efficient to use even if you're on a Mac or Windows, and you can see why I'm taking time out of my Sunday afternoon to tell you about it!

I'll write more about this in future, but I think this is going to be rather big. For more information, look at the Docker site, search YouTube for Docker-related videos, and take a look at the Docker blog.

From the "Things I should patent but probably won't" department...

It occurs to me that the Book of Job has a persuasive argument for space travel.
